Modernizing Events Platform At ShareChat

Introduction

At ShareChat’s Central Data Platform (CDP) team, we cater to more than 1000 different event types from 5 different apps, receiving and ingesting more than 80 billion events per day on a regular basis. At the peak of a regular day, our Kafka cluster handles more than 2 GB/sec of compressed throughput!

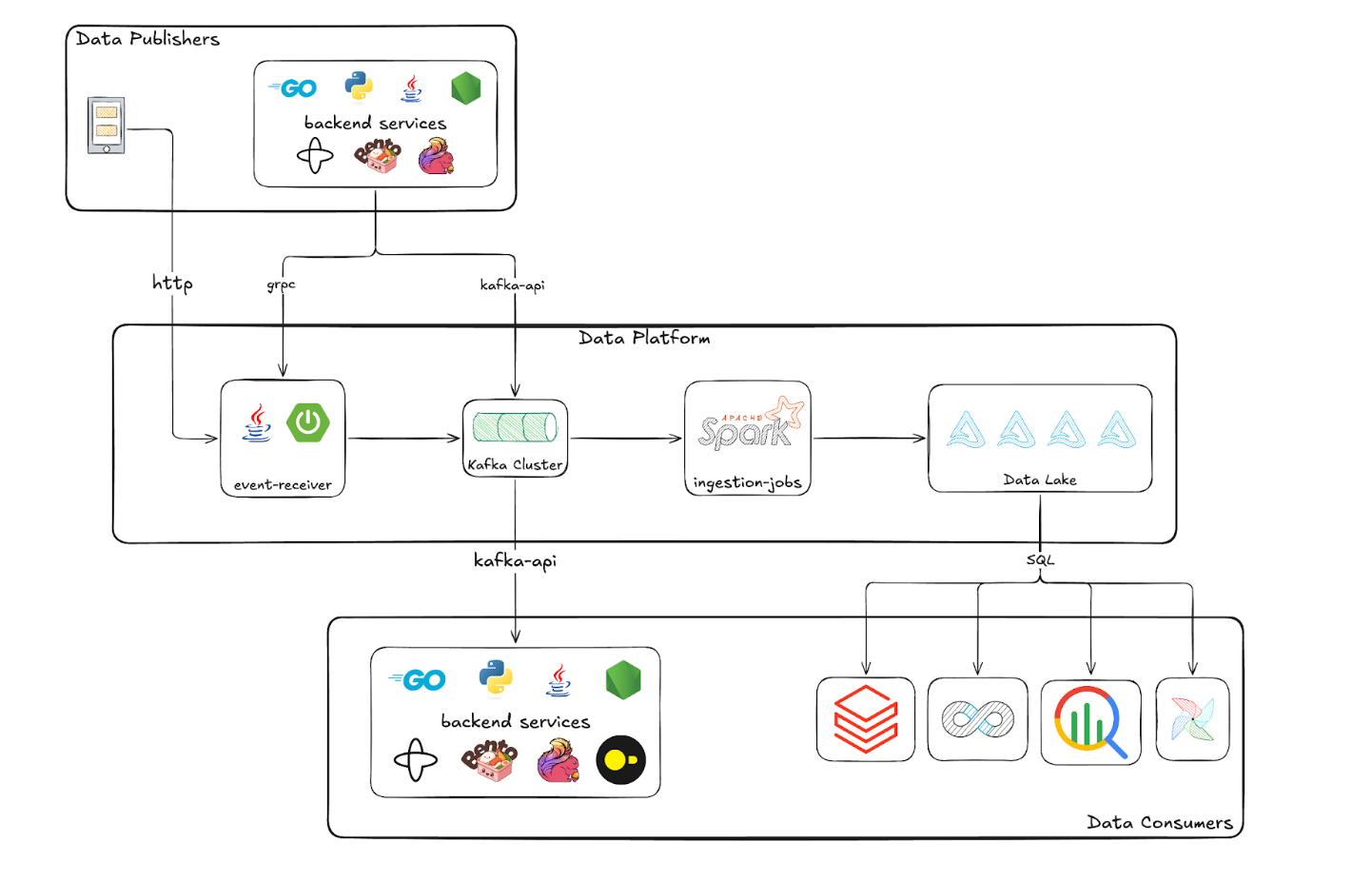

CDP is responsible for enabling various ways of publishing and consuming data. For publishing events, we support the HTTP, gRPC and Kafka protocols. For consuming data, we support Kafka and SQL.

The system handles such a scale with high resiliency and low cost. This has been possible due to multiple deliberate architectural choices.

On top of the scale of the events, we also had to solve for the scale of event types. Having this many event types pushed us to come up with generic solutions that serve all of them without custom handling per event type.

The Shift

Until mid-2025, our events platform was fragmented into the following event processing flows:

- The majority of events were received in JSON format and processed end-to-end in JSON format.

  - Onboarding to this flow required developers to raise a PR in a git repo, get it approved, merge it, wait for the build, and deploy the build.

  - This process would create or update tables in our data lake and set up the ingestion pipeline for the event being onboarded.

- A small subset of high-scale events were received in JSON, converted to protobuf format with code written by developers, and processed in protobuf format in all downstreams.

  - Onboarding to this flow required developers to raise PRs in multiple git repos, get approval for them, and deploy them.

    - A PR in the warehouse schema manager repo to add fields to the warehouse table.

    - A PR in our central protobuf contract git repo to onboard the protobuf contract.

    - A PR in the service where we were converting JSON payloads to protobuf format.

  - Beyond the effort involved, this process was error-prone as well. Types in protobuf contracts and warehouse tables need to be compatible. For example, if a field's type in the protobuf contract is `int64` and the corresponding column’s type in Delta Lake is `int` (a 32-bit integer), then ingestion will fail. In Delta Lake, we need to use the `long` type to be able to ingest data from an `int64` field from protobuf.

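To make the `int64` versus `int` mismatch described above concrete, here is a minimal sketch of the kind of check that could catch it before ingestion. The mapping table, the function name `find_incompatible_fields`, and the plain-dictionary schema representation are all assumptions made for illustration; they are not the platform's actual validation logic, and a real check might also accept safe widening (for example `int32` into `long`).

```python
# Hypothetical mapping from protobuf scalar types to the Delta Lake column
# type expected for them. Illustrative only; a real policy may differ.
PROTO_TO_DELTA = {
    "int32": "int",
    "int64": "long",
    "float": "float",
    "double": "double",
    "bool": "boolean",
    "string": "string",
    "bytes": "binary",
}


def find_incompatible_fields(proto_fields, delta_columns):
    """Return (field, proto_type, delta_type) tuples that do not line up.

    Both arguments are dicts of field name -> type name. A field that is
    missing from the table is reported with a delta_type of None.
    """
    problems = []
    for name, proto_type in proto_fields.items():
        delta_type = delta_columns.get(name)
        expected = PROTO_TO_DELTA.get(proto_type)
        if expected is None or delta_type != expected:
            problems.append((name, proto_type, delta_type))
    return problems


# Example: an int64 field declared against a 32-bit int column is flagged.
contract = {"user_id": "int64", "name": "string"}
table = {"user_id": "int", "name": "string"}
print(find_incompatible_fields(contract, table))
# -> [('user_id', 'int64', 'int')]
```

Running a check like this at onboarding time would surface the mismatch during review instead of as a failed ingestion.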

Until the beginning of 2025, the major focus across all teams at ShareChat was to increase efficiency and reduce the cost of our infra. During that period, optimizations needed quick work and platformization had to wait.

Starting in 2025, the focus shifted from cost saving to building new revenue streams. We needed to make sure that product engineering teams could build features quickly and efficiently! For that, all the hurdles mentioned above needed to be removed.

This was a long journey, and it cannot all be covered in a single blog post. So over the next few weeks, we are going to share what we learned while modernizing the platform for scale, cost and developer experience. If this interests you, stay tuned!